HDFS (Hadoop Distributed File System) has become synonymous with Big Data storage. Its ability to scale seamlessly across commodity hardware and efficiently manage massive datasets has made it a cornerstone of the Big Data ecosystem. However, for all its strengths, HDFS isn’t a one-size-fits-all solution. This blog post delves into scenarios where alternative storage solutions might offer a better fit, ensuring optimal performance and cost-efficiency for your specific data needs.

The Achilles’ Heel of HDFS: Latency and Random Reads

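HDFS excels at storing and processing large datasets in a batch-oriented fashion. Its strength lies in sequential reads, where data is accessed in large, contiguous blocks. However, when it comes to low-latency access or frequent random reads, HDFS falters. The system is tuned for high throughput rather than low latency. Reading data involves a metadata lookup on the NameNode to locate the relevant blocks, followed by a network connection to a DataNode that holds them. That overhead is amortized over a long sequential stream but not over many small, scattered reads, which translates into slow response times for real-time or interactive workloads.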
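To make the latency point concrete, here is a back-of-the-envelope cost model, not a benchmark. It assumes that every read request pays a fixed setup overhead and that data then streams at a constant rate. The 10 ms overhead, 100 MB/s throughput, and 4 KiB record size are hypothetical placeholders, not measured HDFS figures.

```python
# Illustrative cost model only: the latency and throughput figures below are
# hypothetical placeholders, not measured HDFS numbers.


def read_time_seconds(total_bytes: int, num_requests: int,
                      per_request_latency_s: float,
                      throughput_bytes_per_s: float) -> float:
    """Each request pays a fixed setup cost; the payload then streams at a fixed rate."""
    return num_requests * per_request_latency_s + total_bytes / throughput_bytes_per_s


GIB = 1024 ** 3
RECORD = 4 * 1024      # hypothetical 4 KiB record size
LATENCY = 0.010        # hypothetical 10 ms overhead per request
THROUGHPUT = 100e6     # hypothetical 100 MB/s streaming rate

# One large sequential read of 1 GiB.
sequential = read_time_seconds(GIB, 1, LATENCY, THROUGHPUT)

# The same 1 GiB fetched as independent random reads of one record each.
random_reads = read_time_seconds(GIB, GIB // RECORD, LATENCY, THROUGHPUT)

print(f"sequential: {sequential:.1f} s, random: {random_reads:.1f} s")
```

Under these assumptions the single sequential read takes about 10.7 seconds, while the 262,144 random reads take about 2,632 seconds (roughly 44 minutes), because the fixed per-request overhead, not the bytes transferred, dominates. Real numbers depend heavily on hardware, caching, and configuration.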

Example 1: Online Transaction Processing (OLTP)

Imagine a high-volume e-commerce platform, where customer transactions and inventory updates must be processed in real time. The frequent updates and random reads involved in order fulfillment or product-catalog management are a poor match for HDFS, whose files are write-once: they can be appended to but not modified in place. In this scenario, NoSQL databases such as Cassandra or HBase, designed for high-throughput, low-latency reads and writes, would be a more suitable choice. (HBase itself persists its data on HDFS, but it adds the random-access layer that raw HDFS lacks.)

Small Files and Big Headaches: HDFS and the File Size Dilemma

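HDFS manages data in large blocks, typically from 64 MB to 1 GB depending on version and configuration (the default is 128 MB in Hadoop 2.x and later). This is efficient for large files, but a large number of small files (e.g., sensor readings, log files) becomes cumbersome. The NameNode keeps the metadata for every file, directory, and block in memory, so millions of small files consume NameNode memory out of all proportion to the data they contain. In addition, MapReduce-style jobs typically create at least one input split per file, so each small file becomes a separate, tiny unit of work. Together, these overheads negate the benefits of HDFS for such workloads.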
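The rough arithmetic below shows why the small-file case hurts. It is a sketch built on two assumptions: the widely quoted rule of thumb that each file, directory, or block object costs on the order of 150 bytes of NameNode memory, and a hypothetical data set of 10 million readings of 10 KiB each. Directories are ignored for simplicity.

```python
import math

# Widely quoted rule of thumb (an approximation, not an exact figure): each
# file, directory, or block object held in NameNode memory costs ~150 bytes.
BYTES_PER_OBJECT = 150
BLOCK_SIZE = 128 * 1024 * 1024  # 128 MiB, the default in Hadoop 2.x and later


def namespace_objects(num_files: int, file_size: int, block_size: int = BLOCK_SIZE) -> int:
    """Count file objects plus block objects (directories are ignored)."""
    blocks_per_file = math.ceil(file_size / block_size)
    return num_files * (1 + blocks_per_file)


# Hypothetical workload: 10 million readings of 10 KiB each.
total_bytes = 10_000_000 * 10 * 1024

# Case 1: every reading stored as its own small file.
small = namespace_objects(10_000_000, 10 * 1024)

# Case 2: the same bytes packed into block-sized files (count rounded up).
num_large = math.ceil(total_bytes / BLOCK_SIZE)
large = namespace_objects(num_large, BLOCK_SIZE)

print(f"small files: {small:,} objects, ~{small * BYTES_PER_OBJECT / 1e9:.1f} GB of NameNode heap")
print(f"large files: {large:,} objects, ~{large * BYTES_PER_OBJECT / 1e6:.2f} MB of NameNode heap")
```

Under these assumptions, storing each reading as its own file needs 20 million namespace objects (roughly 3 GB of NameNode heap), whereas packing the same bytes into roughly 763 block-sized files needs about 1,500 objects. The stored bytes are the same; only the metadata changes.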

Example 2: Sensor Data Analysis in an IoT System

Consider an Internet-of-Things (IoT) application collecting temperature data from thousands of sensors spread across a city. If each sensor reading is written as its own small file, the file count quickly grows into the millions, and storing and processing those files in HDFS becomes challenging because every file adds metadata that the NameNode must keep in memory. Object storage such as Amazon S3, which does not hold its entire namespace in a single server's memory, copes better with very large numbers of small objects and would be a better fit in this scenario.

Structured Data Seeks Structure: HDFS and the Schema Conundrum

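HDFS is a file system: it stores whatever bytes it is given, whether raw logs, images, or structured records, and leaves their interpretation to the applications that read them. A schema can be applied later (schema-on-read), but it is not enforced when data is written. This lack of built-in schema management becomes a problem for data that is inherently structured and requires strong data integrity checks.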
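The schema-on-read behavior can be sketched in a few lines of Python. Here an in-memory buffer stands in for a file in HDFS, and `SCHEMA` and `read_with_schema` are hypothetical names for this illustration. Nothing checks the records when they are written, so the malformed row only surfaces when a reader tries to apply the schema.

```python
import csv
import io

# Hypothetical target schema: column name -> conversion applied at read time.
SCHEMA = {"customer_id": int, "name": str, "email": str}

# An in-memory buffer stands in for a file in HDFS. Writing it performs no
# validation, so the malformed second record is stored like any other.
raw_file = io.StringIO(
    "customer_id,name,email\n"
    "1,Alice,alice@example.com\n"
    "two,Bob\n"
    "3,Carol,carol@example.com\n"
)


def read_with_schema(fh):
    """Apply SCHEMA while reading; return (valid records, rejected rows with reasons)."""
    valid, rejected = [], []
    for row in csv.DictReader(fh):
        try:
            record = {}
            for column, cast in SCHEMA.items():
                value = row.get(column)
                if value is None:  # DictReader fills missing columns with None
                    raise ValueError(f"missing column {column!r}")
                record[column] = cast(value)
            valid.append(record)
        except ValueError as exc:
            rejected.append((row, str(exc)))
    return valid, rejected


valid, rejected = read_with_schema(raw_file)
print(f"{len(valid)} valid, {len(rejected)} rejected: {rejected[0][1]}")
```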

Example 3: Customer Relationship Management (CRM) System

A CRM system manages customer data such as names, addresses, and purchase history. This data is highly structured and requires robust schema enforcement to ensure accuracy and consistency. Using HDFS for such a system would necessitate additional layers of schema management on top, adding complexity. In this case, a relational database such as MySQL or PostgreSQL, which enforces types and constraints on every write, would be a more appropriate choice.
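For contrast, a relational database checks every write against the declared schema. The sketch below uses Python's built-in `sqlite3` module as a lightweight stand-in for MySQL or PostgreSQL; the `customers` table and the `insert_customer` helper are hypothetical. SQLite's column typing is looser than that of MySQL or PostgreSQL, so the example relies on `NOT NULL`, `UNIQUE`, and `CHECK` constraints rather than column types.

```python
import sqlite3

# SQLite (bundled with Python) stands in for MySQL or PostgreSQL here; the
# table and its constraints are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY,
        name        TEXT NOT NULL,
        email       TEXT NOT NULL UNIQUE CHECK (email LIKE '%_@_%')
    )
    """
)


def insert_customer(customer_id, name, email):
    """Try to insert one row; return None on success or the constraint error message."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO customers (customer_id, name, email) VALUES (?, ?, ?)",
                (customer_id, name, email),
            )
        return None
    except sqlite3.IntegrityError as exc:
        return str(exc)


print(insert_customer(1, "Alice", "alice@example.com"))  # None: accepted
print(insert_customer(2, None, "bob@example.com"))       # NOT NULL violation
print(insert_customer(3, "Carol", "alice@example.com"))  # UNIQUE violation
print(insert_customer(4, "Dave", "not-an-email"))        # CHECK violation
```

Only the first row is stored; the other three calls get a constraint error back instead of the database silently accepting bad data.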

Cost Considerations: Beyond the Hardware Bargain

HDFS offers undeniable cost advantages when storing large datasets on commodity hardware. However, for smaller datasets, or for workloads dominated by frequent low-latency access, the fixed cost of running an HDFS cluster (servers, administration, monitoring, and upgrades) might outweigh the benefits.

Example 4: Log Data Analysis for a Small Startup

A small startup might generate gigabytes of log data per day, a volume that may not justify the overhead of setting up and managing an HDFS cluster. Cloud storage with a pay-as-you-go model, where the bill scales with the data actually stored and processed, could be a more cost-effective way to store and analyze these logs.
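Whether a cluster pays for itself can be framed as a simple break-even calculation. This is a deliberately simplified sketch: every price in it is a hypothetical placeholder rather than a real quote, the cluster is modeled as a flat monthly cost, and request, compute, and data-transfer charges are ignored.

```python
# All prices below are hypothetical placeholders; substitute real quotes.
# The model ignores request, compute, and data-transfer charges.


def monthly_object_storage_cost(gb_per_day: float, retention_days: int,
                                price_per_gb_month: float) -> float:
    """Steady-state monthly bill: retained volume times a per-GB-month rate."""
    retained_gb = gb_per_day * retention_days
    return retained_gb * price_per_gb_month


def break_even_gb_per_day(cluster_cost_per_month: float, retention_days: int,
                          price_per_gb_month: float) -> float:
    """Daily ingest at which pay-as-you-go storage costs as much as a flat-cost cluster."""
    return cluster_cost_per_month / (retention_days * price_per_gb_month)


GB_PER_DAY = 5.0       # hypothetical startup log volume
RETENTION_DAYS = 90    # hypothetical retention policy
PRICE = 0.02           # hypothetical $/GB-month for object storage
CLUSTER = 1500.0       # hypothetical $/month for a small self-managed cluster

storage_bill = monthly_object_storage_cost(GB_PER_DAY, RETENTION_DAYS, PRICE)
threshold = break_even_gb_per_day(CLUSTER, RETENTION_DAYS, PRICE)
print(f"pay-as-you-go: ${storage_bill:,.2f}/month vs cluster: ${CLUSTER:,.2f}/month")
print(f"break-even ingest: ~{threshold:,.0f} GB/day")
```

With these made-up inputs, the pay-as-you-go bill comes to about $9 a month, and the flat-cost cluster would only become cheaper above roughly 833 GB of new logs per day. Plug in your own quotes; the shape of the comparison matters more than these particular numbers.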

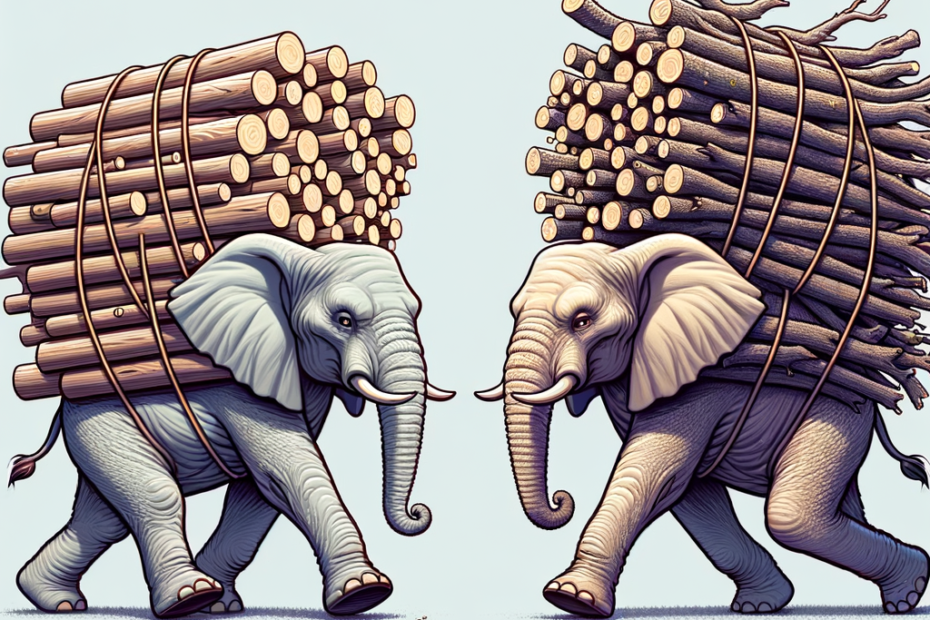

The Power of Choice: Exploring a Hybrid Approach

The beauty of the Big Data ecosystem lies in its diversity. In many cases, a hybrid approach might be optimal. HDFS can remain the backbone for storing massive datasets, while other solutions like NoSQL databases or cloud storage handle specific use cases requiring low latency, random reads, or structured data management.
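One way to picture a hybrid setup is a small routing rule that sends each workload to the store this post recommends for it. The sketch below is hypothetical: the `Workload` fields, the priority order of the checks, and the 10 MB small-file cut-off are illustrative assumptions, not an established algorithm.

```python
from dataclasses import dataclass
from enum import Enum


class Store(Enum):
    HDFS = "HDFS"
    NOSQL = "NoSQL database (e.g. Cassandra or HBase)"
    RELATIONAL = "relational database (e.g. MySQL or PostgreSQL)"
    OBJECT_STORE = "cloud object storage (e.g. Amazon S3)"


# Hypothetical cut-off for "small" files; tune it for your own cluster.
SMALL_FILE_MB = 10.0


@dataclass
class Workload:
    needs_strict_schema: bool
    needs_low_latency_random_access: bool
    typical_file_mb: float


def choose_store(w: Workload) -> Store:
    """Encode this post's rules of thumb, checked in order of priority."""
    if w.needs_strict_schema:
        return Store.RELATIONAL
    if w.needs_low_latency_random_access:
        return Store.NOSQL
    if w.typical_file_mb < SMALL_FILE_MB:
        return Store.OBJECT_STORE
    return Store.HDFS


# Hypothetical example workloads.
workloads = {
    "CRM records": Workload(True, True, 0.001),
    "product catalog": Workload(False, True, 0.01),
    "sensor readings": Workload(False, False, 0.01),
    "batch analytics archive": Workload(False, False, 512.0),
}
for name, workload in workloads.items():
    print(f"{name}: {choose_store(workload).value}")
```

The CRM workload lands in the relational store even though it also needs fast lookups, because schema enforcement is checked first. Reordering the rules changes the outcome, which is exactly the kind of trade-off worth making explicit.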

Conclusion: Choosing the Right Tool for the Job

Selecting the optimal storage solution for your Big Data needs hinges on a clear understanding of your data's characteristics and how it will be accessed. Weigh data volume and file sizes, latency requirements, read and write patterns, schema requirements, and cost before making a decision. Remember, HDFS is a powerful tool, but it is not a silver bullet. By carefully evaluating your specific needs and exploring alternative solutions, you can ensure your Big Data storage strategy delivers optimal performance and cost-efficiency.